The other day my students asked about covariance and contravariance. They were visibly frustrated because they were trying to grasp the concepts and the covariance/contravariance modifiers on generics all at once. While trying to explain the concept without generics, I came up with an inheritance example on the spot that didn’t work, which frustrated them even more. The example not only failed to make a good case for covariance/contravariance, it didn’t work as an example of inheritance either.

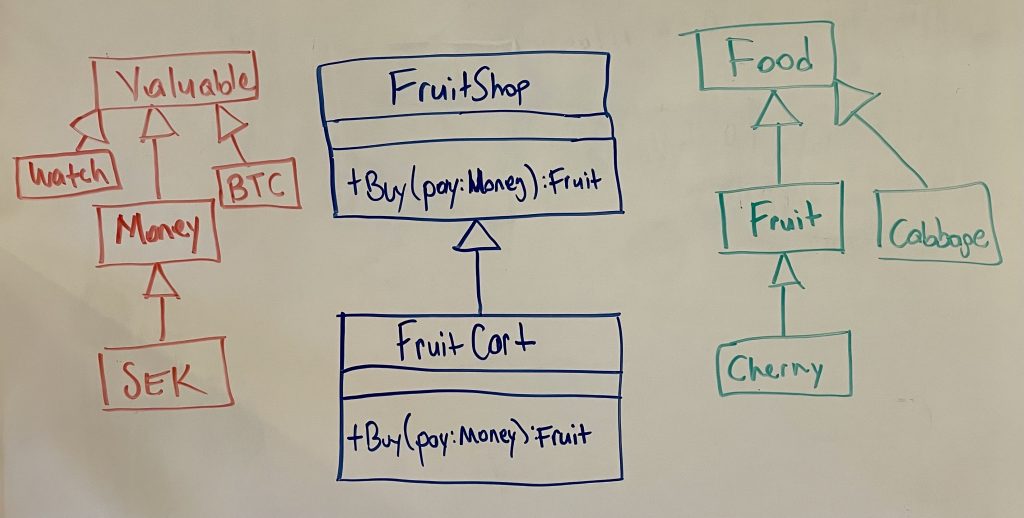

First, here is the good example of covariance/contravariance that I gave my students on the second try:

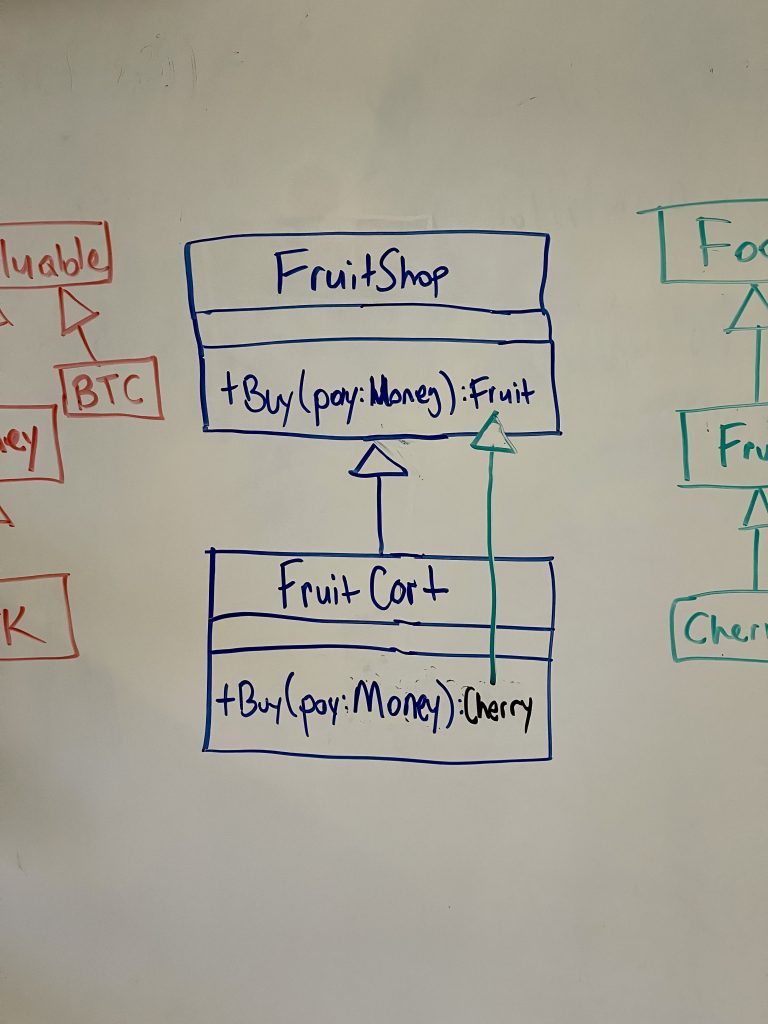

FruitCart is a FruitShop but it is portable. And it does everything a FruitShop promises to do:

- If you give it Money, it gives you Fruit.

It’s what we call invariant. The types of the input and output parameters do not change.
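The invariant case could be sketched in C# roughly as follows. The method name `Sell` and the empty `Money` and `Fruit` classes are assumptions made for illustration; the diagrams may use different names.

```csharp
using System;

class Money { }
class Fruit { }

class FruitShop
{
    // The promise: give it Money, get Fruit back.
    public virtual Fruit Sell(Money payment) => new Fruit();
}

class FruitCart : FruitShop
{
    // Same input type, same output type: the signature is unchanged.
    public override Fruit Sell(Money payment) => new Fruit();
}

class Program
{
    static void Main()
    {
        FruitShop shop = new FruitCart(); // a FruitCart used as a FruitShop
        Fruit fruit = shop.Sell(new Money());
        Console.WriteLine(fruit.GetType().Name); // prints "Fruit"
    }
}
```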

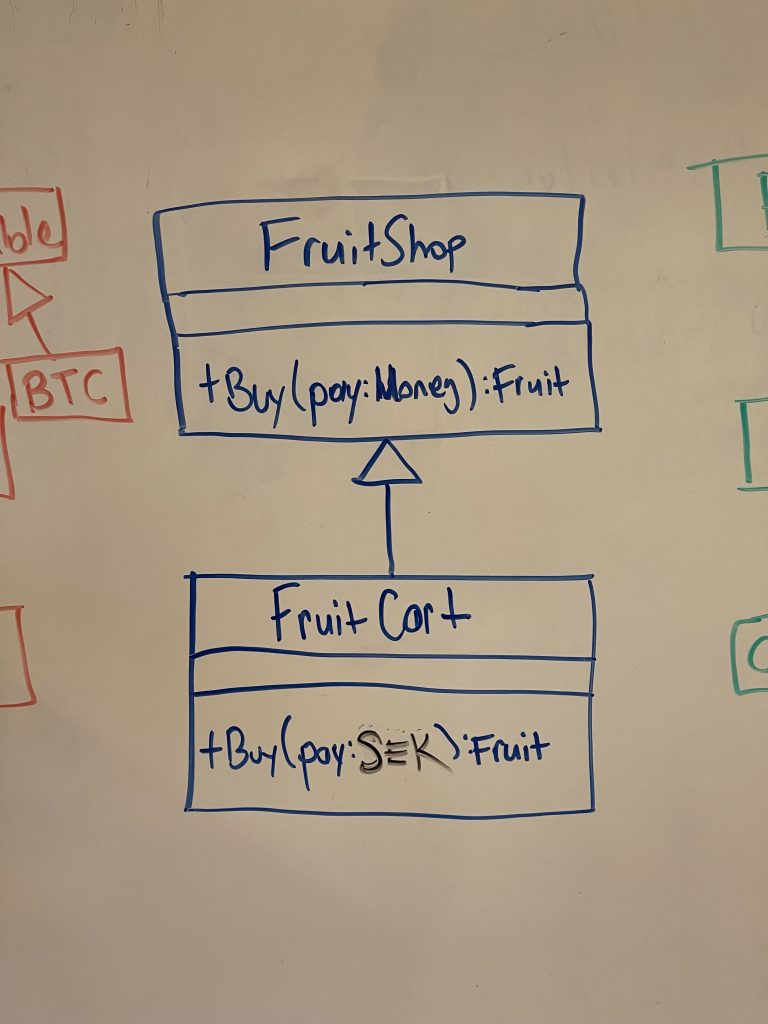

So it’s all good. But let’s say we want to be more specific about the currency the FruitCart will accept. Instead of a general Money, it wants only SEK.

That might make sense at first: a portable stand may not have access to exchange rates, for example. But it goes against the substitutability principle, because now the promise

- If you give it Money, it gives you Fruit.

is not kept. You can’t give it just any Money; it asks for Swedish kronor. It’s not substitutable for a FruitShop, because it can’t do everything a FruitShop does. Specifically, it can’t take EUR and give you Fruit.

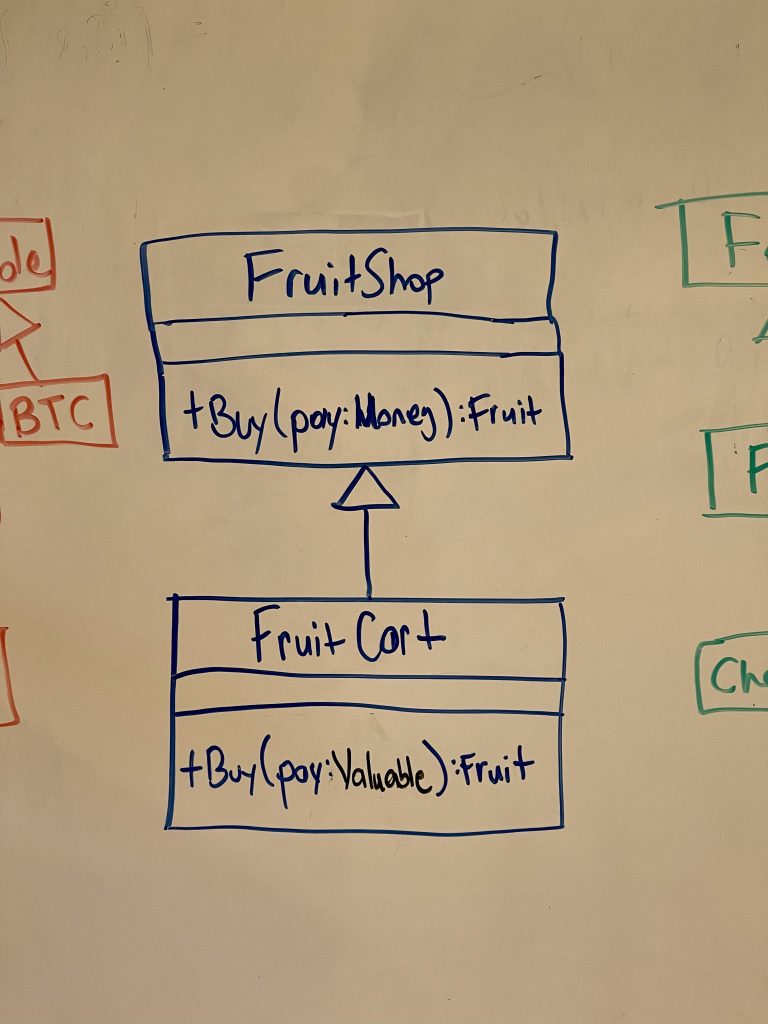

What if the FruitCart wanted to be more general about what it accepts, would that work?

Yes, because FruitCart still keeps the promise

- If you give it Money, it gives you Fruit.

Since Money is Valuable, FruitCart will be able to honour the promise. As well as accepting other things such as watches and Bitcoin, it still accepts Money.
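Here is one way to see this in C#, as a sketch rather than a translation of the diagram. C# does not let an override widen its parameter type, so the example assumes a non-generic delegate, `Sale`, as a stand-in for the FruitShop promise. The names `Sale`, `Stalls` and `SellForAnythingValuable` are made up for illustration.

```csharp
using System;

class Valuable { }
class Money : Valuable { }
class Fruit { }

// A non-generic delegate that captures the FruitShop promise:
// give it Money, get Fruit back.
delegate Fruit Sale(Money payment);

static class Stalls
{
    // Accepts anything Valuable, which is more general than Money.
    public static Fruit SellForAnythingValuable(Valuable payment) => new Fruit();
}

class Program
{
    static void Main()
    {
        // Allowed: a method taking Valuable can stand in where Money is promised.
        Sale sale = Stalls.SellForAnythingValuable;
        Fruit fruit = sale(new Money());
        Console.WriteLine(fruit != null); // prints "True"
    }
}
```

The compiler accepts the assignment because every Money is a Valuable, so anything a caller can legally hand to a `Sale` is also acceptable to the more general method.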

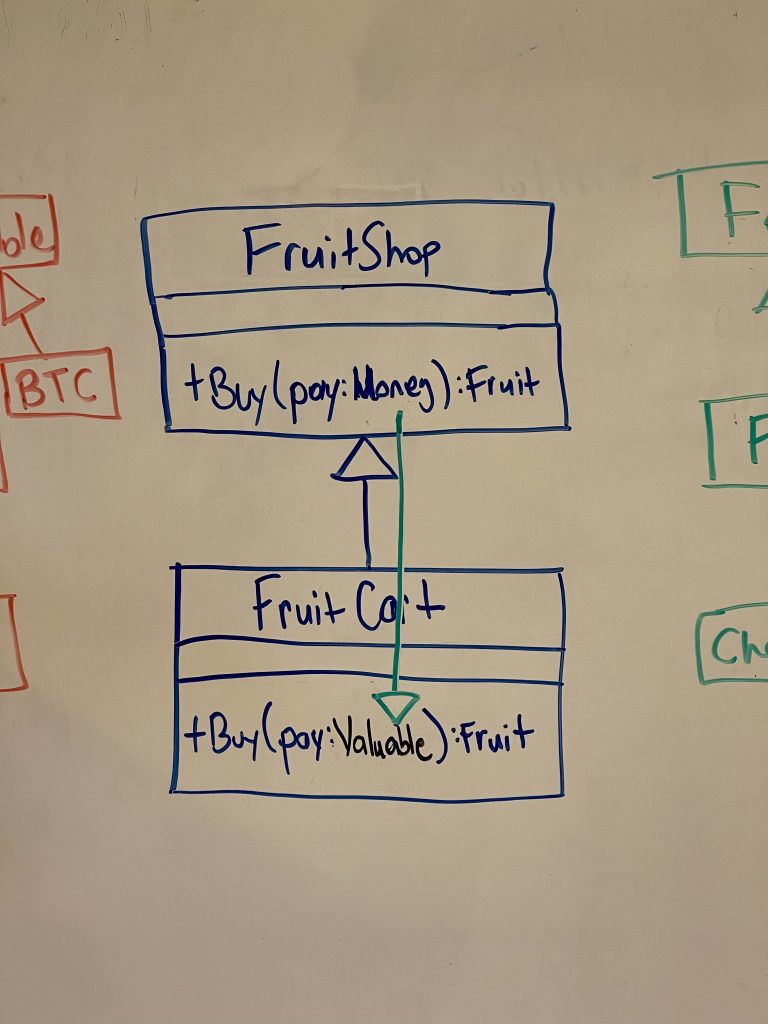

To summarise: As the host type gets more specific (FruitShop to FruitCart), the input type of a method can get more general (Money to Valuable). As one gets more specific, the other can get more general. They can vary in opposite directions. Input types are contravariant. It helps me to visualise it by drawing a second arrow showing the is-a relation of the input parameter type:

How about the output parameter type Fruit? If FruitCart returned Cabbage instead of Fruit, would it still be a FruitShop? No, because the promise

- If you give it Money, it gives you Fruit.

is not kept: Cabbage ain’t Fruit. But Cherries are:

Cherries are Fruit, therefore the promise is kept. FruitCart is just a specific type of FruitShop that only sells cherries.

To summarise: As the host type gets more specific (FruitShop to FruitCart), the output type of a method can get more specific (Fruit to Cherry). As one gets more specific, the other can get more specific as well. They can vary in the same direction. Output types are covariant.
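The covariant output can be written directly in C# 9 or later, which supports covariant return types on overrides. This is a minimal sketch; the method name `Sell` is again an assumption.

```csharp
using System;

class Money { }
class Fruit { }
class Cherry : Fruit { }

class FruitShop
{
    public virtual Fruit Sell(Money payment) => new Fruit();
}

class FruitCart : FruitShop
{
    // Covariant return type: Cherry is a Fruit (requires C# 9 or later).
    public override Cherry Sell(Money payment) => new Cherry();
}

class Program
{
    static void Main()
    {
        FruitShop shop = new FruitCart();
        Fruit fruit = shop.Sell(new Money());   // callers still get a Fruit
        Console.WriteLine(fruit is Cherry);     // prints "True"

        Cherry cherry = new FruitCart().Sell(new Money()); // no cast needed
        Console.WriteLine(cherry.GetType().Name);          // prints "Cherry"
    }
}
```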

Now back to my bad example.

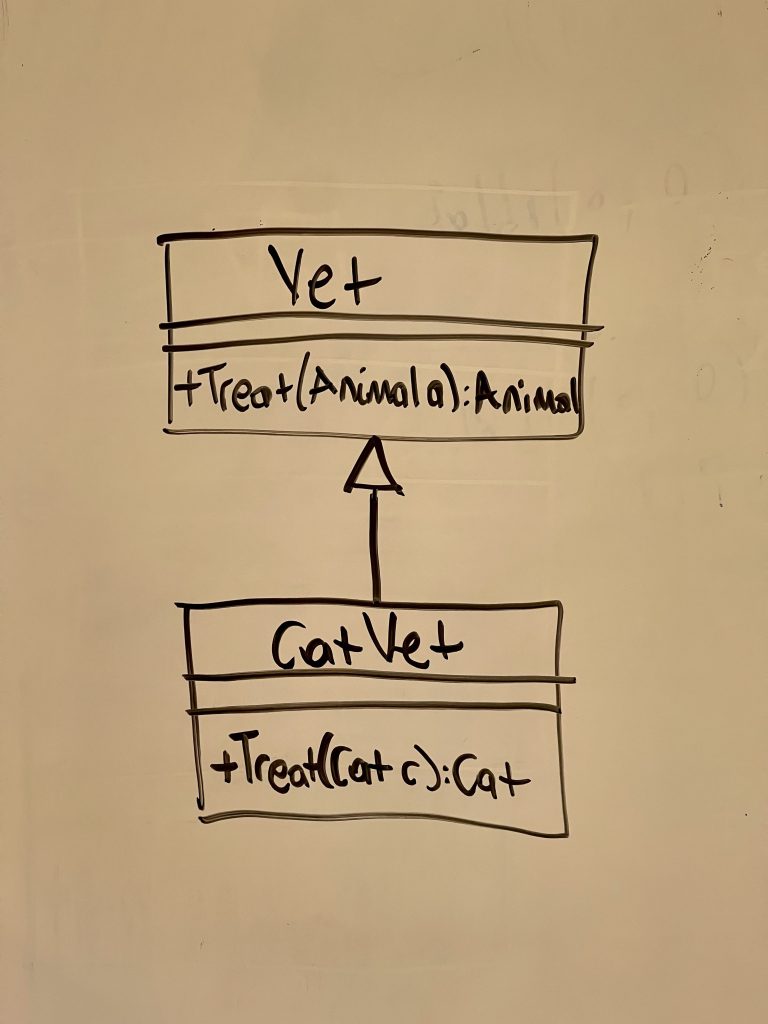

The idea was that a CatVet inherits from Vet and modifies the parameter types so it only treats cats:

The output type would not have been a problem because Cat is an Animal, but the input type is the problem: CatVet does not accept other kinds of Animals. Therefore:

CatVet is not a Vet.

Because it can’t treat Dogs or Crocodiles, which are also Animals.

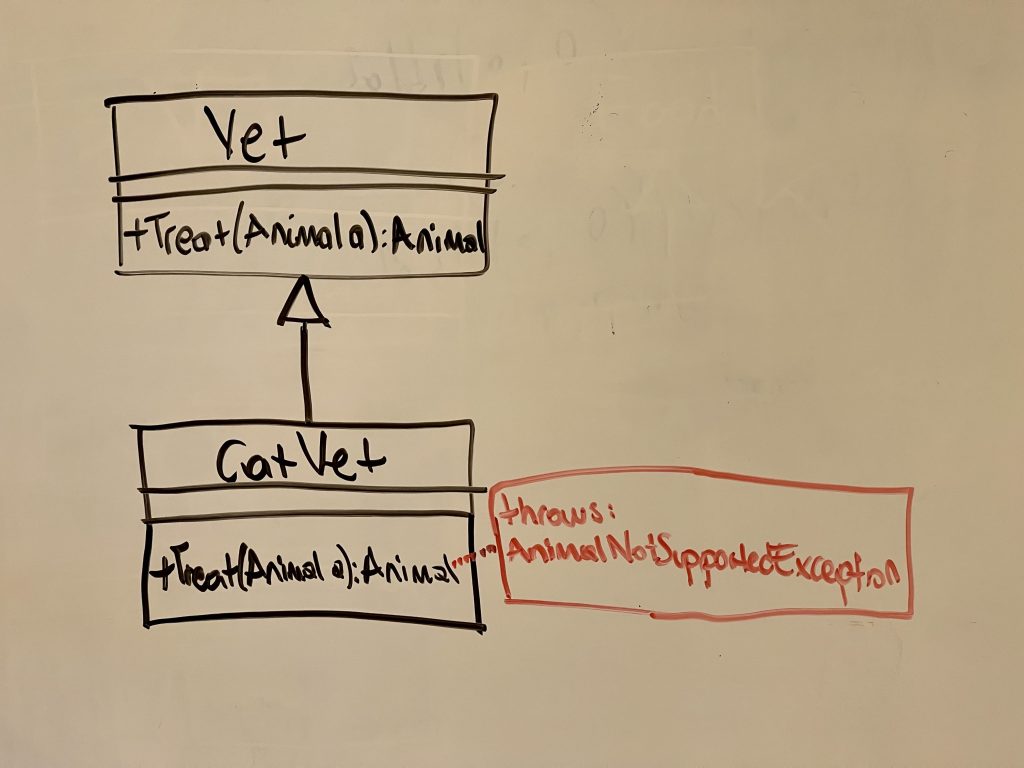

What if we tried to work around it by being smart about the type at runtime? We can keep the input type Animal but make it throw an exception if it’s not a Cat.
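A minimal sketch of that workaround, assuming a `Treat` method that takes and returns an Animal. The choice of `ArgumentException` is mine, not something the example prescribes.

```csharp
using System;

class Animal { }
class Cat : Animal { }
class Dog : Animal { }

class Vet
{
    public virtual Animal Treat(Animal patient) => patient;
}

class CatVet : Vet
{
    // The signature still promises to treat any Animal...
    public override Animal Treat(Animal patient)
    {
        // ...but the promise is broken at runtime for anything that is not a Cat.
        if (patient is not Cat)
            throw new ArgumentException("CatVet only treats cats.", nameof(patient));
        return patient;
    }
}

class Program
{
    static void Main()
    {
        Vet vet = new CatVet(); // looks like any other Vet
        try
        {
            vet.Treat(new Dog()); // compiles, fails when run
        }
        catch (ArgumentException e)
        {
            Console.WriteLine(e.Message);
        }
    }
}
```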

Then we merely appear to keep the promise, only to walk back on it while the code is running. We can fool the compiler but we can’t fool the nature of generalisation. This leaky abstraction will taint every piece of code that touches it. Consider the following example: CatVet objects can be passed around as Vets, so a dog owner always needs to make sure she’s not dropping off her dog at a CatVet:

    Dog d = new Dog("Muttley");
    d.Adventure(); // Muttley hurts himself
    Vet v = VetPhonebook.GetAVet();
    if (v is not CatVet)
    {
        var treated = v.Treat(d);
    }

So even code that has nothing to do with Cats or CatVets has to think about the CatVet. This is bad in many ways:

- Adds extra code that isn’t related to the task at hand (less cohesion)

- Introduces a type dependency to CatVet where it is not needed (more coupling)

- Adds another thing to test, which increases the number of tests and weighs down the codebase

All because we wanted CatVet to be a Vet. Why, though? Usually it’s because we want to use inheritance for reusing code. A lot of the things a Vet has or does will be common to a CatVet, like having a physical space, reception, waiting room, drugs, etc. So it is tempting to exploit inheritance for reusing code. But when there is no generalisation behind it, inheritance is only a lazy shortcut. I know this but I often forget because it’s so easy to be lazy.

When I teach inheritance, I don’t skip a compilable but bad example:

    class Animal { string Name; }
    class Fruit : Animal {}

The code above compiles and will run if you make it run. It does have inheritance as well. But it doesn’t have generalisation. The point is, having inheritance doesn’t give you the subtype relationship. And every time we deal with abstraction in OOP, we talk about the subtype relationship, not inheritance.